1. What is Loopback?

The loopback interface (lo) is a virtual network interface that exists entirely in software. It has no physical hardware — no cable, no radio, no chip. It is defined at the OS level and managed entirely by the kernel.

It is called "loopback" because the packet loops back to the same machine that sent it. A packet sent to 127.0.0.1 never leaves the host. It goes down the send path, hits loopback_xmit, and gets injected straight back into the receive path on the same machine. The signal path literally loops back on itself, which is also where the classical electronics term originates (connecting an output back to an input to test a circuit).

There are three main reasons we need it:

- Inter-process communication on the same host. Two processes on the same machine can communicate over TCP/UDP sockets via 127.0.0.1 without needing any network hardware. The kernel provides all the socket semantics (buffering, ordering, flow control) at near-zero cost.
- Testing and development. A web server can be started and tested locally without an external network. A command like curl http://localhost:8080 works even on a machine with no network card at all.
- Services that should not be reachable externally. A database or cache (e.g., Redis, PostgreSQL) can be bound to 127.0.0.1 so only local processes can connect. No firewall rule is needed: a socket bound to 127.0.0.1 cannot be reached from other hosts, and loopback traffic never leaves the machine.

As we will see in the kernel code below, 127.0.0.1 and the machine's own IP (e.g., 172.25.0.2) are both registered as scope host in the local routing table, so both take the loopback path and share all these properties.

2. Route Lookup: From ip_queue_xmit to fib_lookup

In previous articles we entered the network layer via ip_queue_xmit. The function first checks whether the socket has a cached route, and if not, calls ip_route_output_ports to look one up and cache it.

net/ipv4/ip_output.c:

The route is computed by ip_route_output_ports, which calls ip_route_output_flow, then __ip_route_output_key, and finally fib_lookup.

include/net/ip_fib.h:

fib_lookup queries both the local and main route tables, with local being checked first. For localhost traffic (e.g., destination 127.0.0.1), the local table lookup succeeds immediately, so fib_lookup returns 0 right away with res->type = RTN_LOCAL. The main table is not consulted, and the final -ENETUNREACH return is never reached.

-ENETUNREACH is only returned when both table lookups fail, meaning the destination IP has no matching route anywhere. A typical example is connecting to an IP in a subnet for which the machine has no route and no default gateway configured; the kernel has nowhere to forward the packet, so the connection fails with "Network is unreachable".

3. Routing to the Loopback Interface

Back in __ip_route_output_key:

net/ipv4/route.c:

For a request pointing to a local address, dev_out is always set to net->loopback_dev, the loopback virtual network interface. The remaining workflow is the same as for traffic destined to a remote device.

4. MTU on the Loopback Interface

In previous articles we examined whether our skb exceeds the MTU and whether fragmentation is needed.

However, the MTU of the loopback virtual network interface is much larger than that of Ethernet. With ifconfig, we can see that a physical Ethernet interface typically has an MTU of only 1,500 bytes, while the loopback interface reports a far larger value (65,536 bytes by default on recent Linux kernels).

5. Is Accessing a Server via Local IP Faster than 127.0.0.1?

An interesting question: is accessing a web server via the local machine's IP address faster than using 127.0.0.1?

Recall that the choice of output device is made inside __ip_route_output_key, which uses fib_lookup to determine whether the destination is local. Running ip route list table local gives:

It is a common misconception that traffic to 172.25.0.2 will be routed through eth0. To verify, we can capture packets on eth0:

This prints packets passing through the network interface. In another terminal we connect to port 8888 on that address:

No packets are captured on eth0. However, if we trace the loopback interface lo instead:

After running telnet 172.25.0.2 8888 again, packets appear:

The reason lies in the ip route list table local output. Note the keyword scope host on the 172.25.0.2 entry:

scope host means the kernel has registered 172.25.0.2 in the local routing table as a locally-owned address. When fib_lookup runs for destination 172.25.0.2, it hits the local table and returns res.type = RTN_LOCAL, the same result type as 127.0.0.1. The dev eth0 notation only means "this IP address is owned by eth0", not "route traffic through eth0". Once fib_lookup returns RTN_LOCAL, the kernel always redirects the packet to lo. Both addresses end up on the loopback path and see identical performance.

6. dev_hard_start_xmit and loopback_xmit

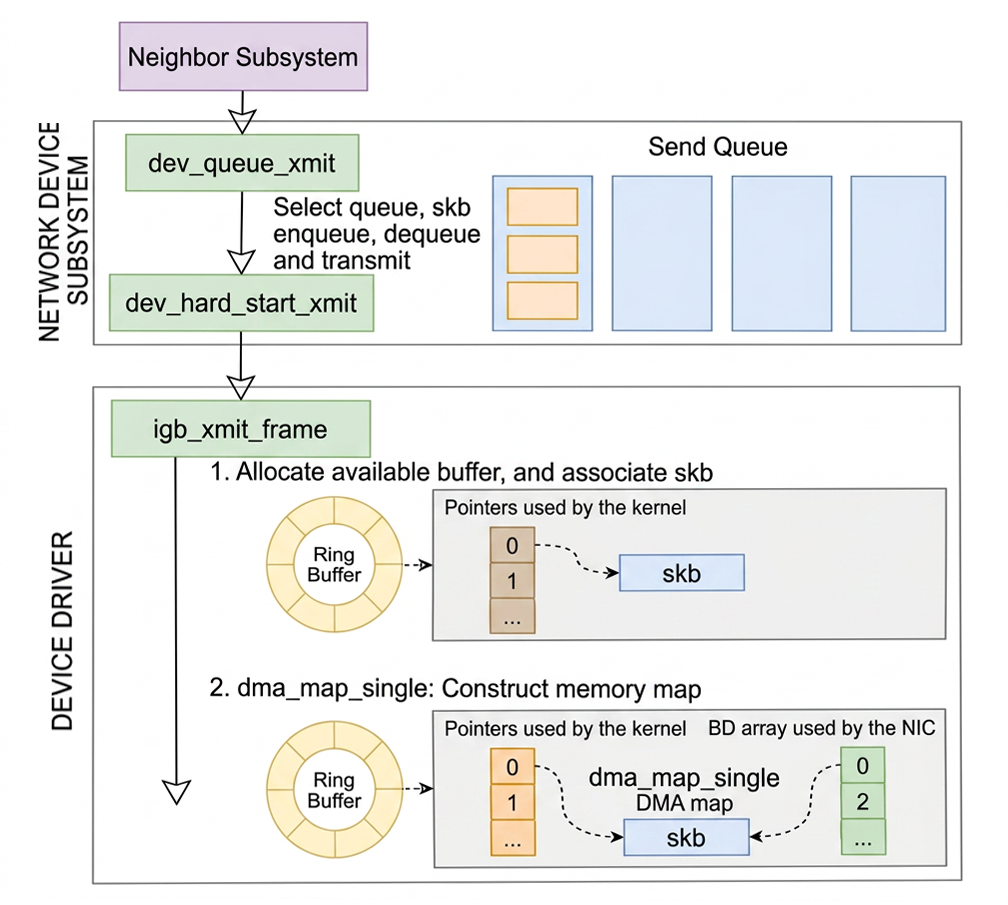

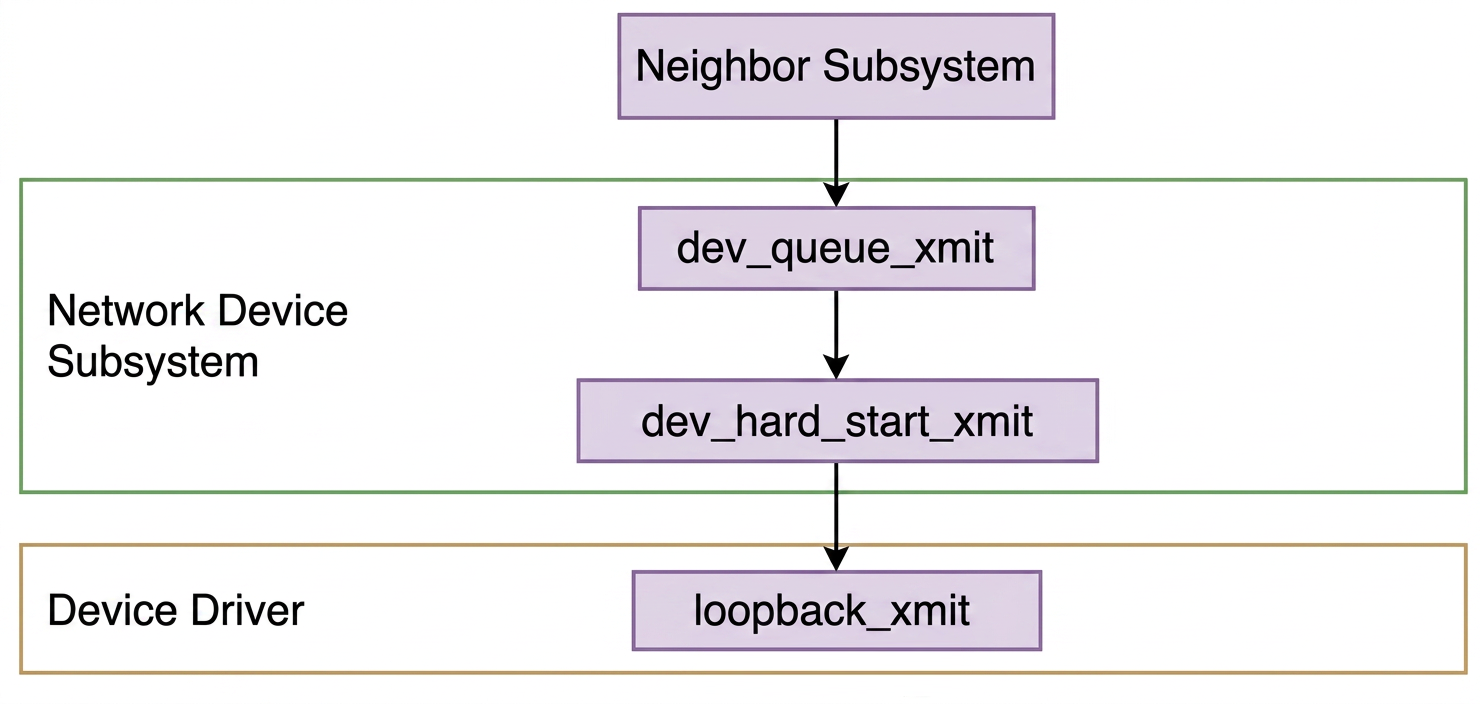

Let's go back to the network device subsystem.

When our request points to loopback, the send path eventually reaches loopback_xmit:

dev_hard_start_xmit dispatches to the device's ndo_start_xmit function pointer.

net/core/dev.c:

For the loopback device, ndo_start_xmit is registered as loopback_xmit via its net_device_ops.

drivers/net/loopback.c:

So when dev_hard_start_xmit is called with dev = net->loopback_dev, it invokes loopback_xmit through the ndo_start_xmit function pointer. This is the same dispatch mechanism used for physical NICs, just with a different registered handler.

7. Inside loopback_xmit

Inside loopback_xmit, the packet is turned around and injected back into the receive path.

drivers/net/loopback.c:

skb_orphan(skb) detaches the skb from its sending socket, clearing skb->sk and calling the destructor. This is necessary because the same skb is about to be handed to the receive side; keeping the sender's socket reference would confuse accounting (e.g., socket send-buffer charges) and could lead to a use-after-free if the sender closes.

netif_rx(skb) enqueues the skb into the CPU's receive backlog queue (softnet_data.input_pkt_queue). This is the same entry point used by physical NIC drivers when they deliver a received packet, so the loopback device reuses the exact same receive path. A NET_RX_SUCCESS return means the packet was accepted; NET_RX_DROP means the queue was full and the packet was dropped. Finally, netif_rx invokes napi_schedule to trigger the receive softirq.

8. process_backlog: the Loopback Equivalent of igb_poll

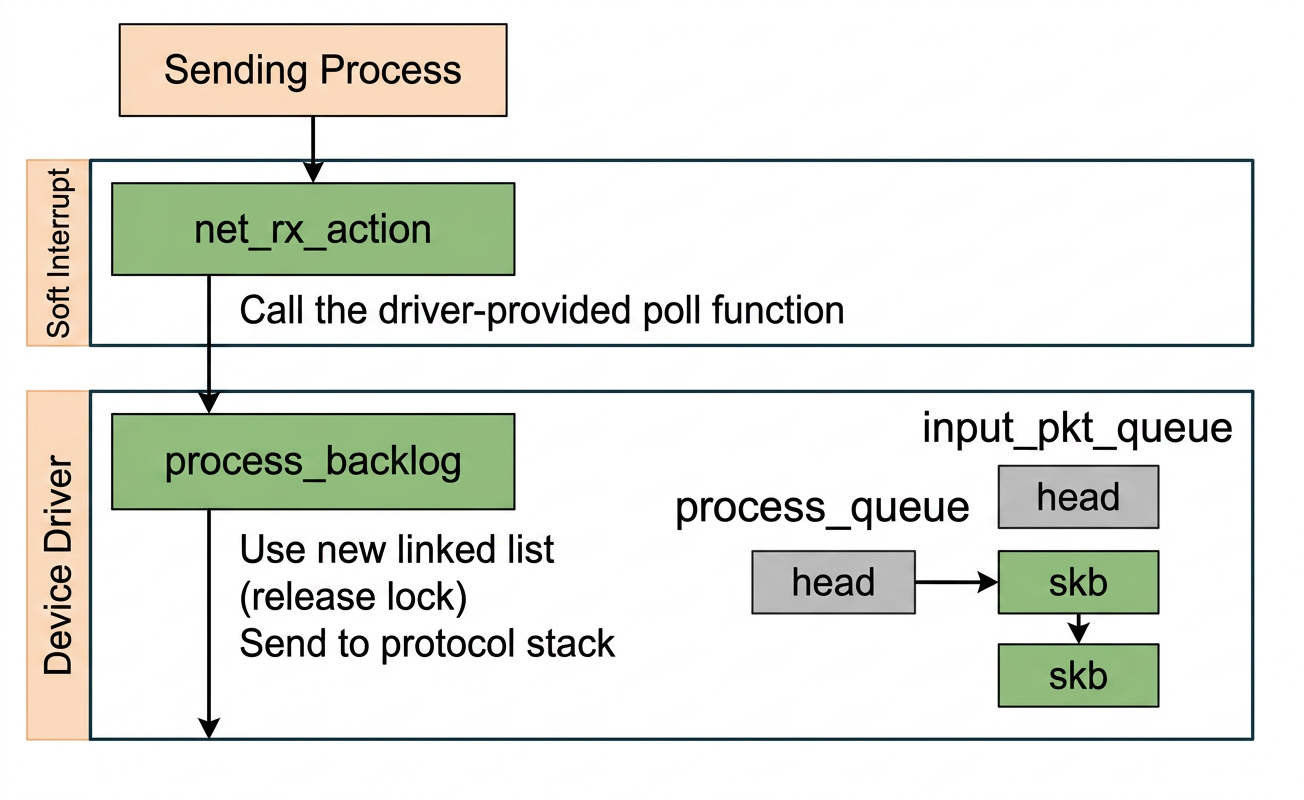

For a physical NIC (e.g., igb), the softirq handler net_rx_action polls the driver via igb_poll, which drains the hardware RX ring buffer and delivers packets up the stack.

In the loopback case, there is no hardware ring buffer and no igb_poll. Instead, netif_rx calls napi_schedule(&sd->backlog) to enqueue a special per-CPU NAPI instance onto the poll list. The .poll of that NAPI instance is wired to process_backlog during kernel initialization inside net_dev_init.

net/core/dev.c:

So when net_rx_action fires and iterates the poll list, it calls sd->backlog.poll which resolves to process_backlog.

9. Two Queues in softnet_data: input_pkt_queue and process_queue

Each softnet_data has two queues, input_pkt_queue and process_queue, for a deliberate reason:

- input_pkt_queue is the producer queue. netif_rx appends to it under a spinlock; it may be called from interrupt or softirq context on any CPU.
- process_queue is the consumer queue. process_backlog drains it without holding any lock.

net/core/dev.c:

The outer while loop first drains process_queue locklessly by calling __netif_receive_skb for each skb. When process_queue is empty, it briefly disables interrupts to atomically splice all pending packets from input_pkt_queue into process_queue, then re-enables interrupts and repeats. If input_pkt_queue was already empty at that point, __napi_complete is called to remove the backlog from the poll list and exit.

The two-queue design keeps the critical section minimal. Only the bulk splice requires the lock, not the per-packet processing. From __netif_receive_skb onward, the path is identical to the physical NIC path: the packet travels up through IP and TCP and is delivered to the receiving socket.

10. Summary

10.1. Do We Need an NIC for I/O Through 127.0.0.1?

No. The loopback interface lo is a pure software construct. The kernel never touches any physical hardware for loopback traffic. There is no NIC, no DMA, no hardware interrupt. loopback_xmit injects the skb directly back into the kernel's receive path via netif_rx, entirely in software.

10.2. How Does a Loopback Packet Travel Through the Kernel?

The table below shows where the loopback path and the remote (physical NIC) path share the same code, and where they diverge:

| Step | Loopback (127.0.0.1) | Remote (e.g., eth0) | Same? |
|---|---|---|---|
| Entry point | ip_queue_xmit | ip_queue_xmit | yes |
| Route lookup | fib_lookup matches in the local table, returns RTN_LOCAL | fib_lookup misses in the local table, matches in the main table, returns RTN_UNICAST | same function, different result |
| Device selection | dev_out = net->loopback_dev | dev_out = eth0 | diverges here |
| Send dispatch | dev_hard_start_xmit calls ndo_start_xmit | dev_hard_start_xmit calls ndo_start_xmit | yes |
| Driver handler | loopback_xmit (software only) | igb_start_xmit (DMA to hardware TX ring) | diverges here |
| Receive enqueue | netif_rx into softnet_data.input_pkt_queue, then napi_schedule on the backlog | packet lands in the hardware RX ring, NIC raises a hardware interrupt, driver calls napi_schedule | different mechanism and queue, same per-CPU poll list |
| Softirq handler | net_rx_action | net_rx_action | yes |
| Poll function | process_backlog (drains software backlog) | igb_poll (drains hardware RX ring) | diverges here |
| Deliver to stack | __netif_receive_skb | __netif_receive_skb | yes |
| Protocol processing | IP layer, TCP layer | IP layer, TCP layer | yes |
| Final delivery | enqueued into receiving socket | enqueued into receiving socket | yes |

In short:

- The path is identical from ip_queue_xmit down to dev_hard_start_xmit.
- It then diverges through the driver and the receive-side poll function.
- It rejoins at __netif_receive_skb and stays identical all the way to the receiving socket.

No hardware is involved at any step in the loopback case.

10.3. Is 127.0.0.1 Faster Than Using the Local Machine's IP Address?

No, there is no meaningful difference.

Both addresses are registered as scope host in the kernel's local routing table. Both cause fib_lookup to return RTN_LOCAL, both are redirected to net->loopback_dev in __ip_route_output_key, and both follow the exact same loopback code path from that point on. The kernel takes identical steps for 127.0.0.1 and for the machine's own IP address.