1.Comparison

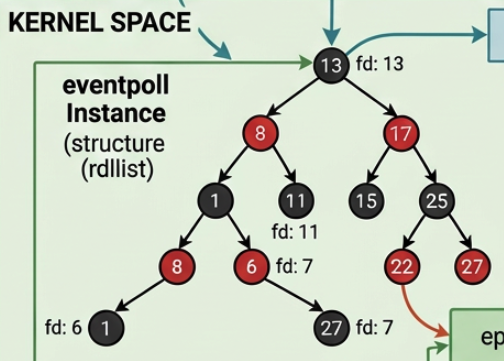

The entire mechanism discussed in "Networking in Linux Kernel: Part III, How Socket Receive Data from NIC" is identical for HTTP and WebSocket connections, because both are plain TCP streams. At the kernel level there is no "HTTP socket" or "WebSocket socket"; there are only AF_INET, SOCK_STREAM sockets and the four concepts covered in this article:

- Socket Creation

- The Blocking Receive Path

- The SoftIRQ Enqueue Path

- The Wake-up Path

The difference between the two protocols is entirely in what user space does after recvfrom() returns.

1.1.HTTP (Short-Lived Connections)

A minimal HTTP/1.0 server illustrates the "one request, one response, close" model:
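A minimal C sketch of that model, assuming POSIX sockets on Linux. The names http10_handle_one and http10_serve, the backlog of 16 and the hard-coded response are hypothetical choices for illustration. It uses recv(), which behaves like recvfrom() with a NULL source address:

```c
#include <arpa/inet.h>
#include <netinet/in.h>
#include <string.h>
#include <sys/socket.h>
#include <sys/types.h>
#include <unistd.h>

/* Serve exactly one request on an accepted connection, then close it.
 * Returns 0 on success, -1 if nothing was received or the send failed. */
int http10_handle_one(int conn_fd)
{
    char req[4096];
    /* Blocks in tcp_recvmsg() -> sk_wait_data() until request bytes arrive. */
    ssize_t n = recv(conn_fd, req, sizeof req - 1, 0);
    if (n <= 0) {
        close(conn_fd);
        return -1;
    }
    req[n] = '\0'; /* a real server would parse the request line here */

    static const char resp[] =
        "HTTP/1.0 200 OK\r\n"
        "Content-Type: text/plain\r\n"
        "Content-Length: 6\r\n"
        "\r\n"
        "hello\n";
    ssize_t sent = send(conn_fd, resp, sizeof resp - 1, 0);

    /* One request, one response, close: the descriptor is released and
     * the kernel starts the FIN exchange. */
    close(conn_fd);
    return sent == (ssize_t)(sizeof resp - 1) ? 0 : -1;
}

/* Accept loop: each client gets its own conn_fd, used for one request. */
int http10_serve(unsigned short port)
{
    int server_fd = socket(AF_INET, SOCK_STREAM, 0);
    if (server_fd < 0)
        return -1;
    int one = 1;
    setsockopt(server_fd, SOL_SOCKET, SO_REUSEADDR, &one, sizeof one);

    struct sockaddr_in addr;
    memset(&addr, 0, sizeof addr);
    addr.sin_family = AF_INET;
    addr.sin_addr.s_addr = htonl(INADDR_ANY);
    addr.sin_port = htons(port);
    if (bind(server_fd, (struct sockaddr *)&addr, sizeof addr) < 0 ||
        listen(server_fd, 16) < 0) {
        close(server_fd);
        return -1;
    }
    for (;;) {
        /* Sleeps on the listening socket's wait queue until a client arrives. */
        int conn_fd = accept(server_fd, NULL, NULL);
        if (conn_fd >= 0)
            http10_handle_one(conn_fd);
    }
}
```

Calling http10_serve(8080) from main() serves requests forever. Each accepted conn_fd lives for exactly one request.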

- After recvfrom() returns, the process handles one request, sends one response, and calls close(). The descriptor and its wait queue are released, and tcp_close() starts the FIN exchange that terminates the TCP connection. The process then loops back to accept(), which blocks on the listening socket's wait queue (a completely different wait queue) until the next client connects.

- recvfrom() is called on the connected socket (conn_fd), not the listening one. The listening socket (server_fd) sits in the LISTEN state and carries no connection of its own. Its only job is to accept new clients, so its sk_receive_queue never carries application data.

1.2.WebSocket (Long-Lived Connections)

A WebSocket server keeps the conn_fd open and calls recvfrom() on it in a loop, handling each WebSocket frame as its bytes arrive.
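A minimal sketch of such a loop in C, assuming a blocking socket. ws_read_loop and on_bytes are hypothetical names. on_bytes only counts bytes, whereas a real server would parse RFC 6455 frames here, including frames split across several recv() calls:

```c
#include <errno.h>
#include <stddef.h>
#include <sys/socket.h>
#include <sys/types.h>

/* Hypothetical frame handler: a real server would parse RFC 6455 frames here
 * (buffering partial ones) and reply on the same conn_fd. This one only
 * counts bytes so the shape of the loop stays visible. */
void on_bytes(const unsigned char *data, size_t len, size_t *total)
{
    (void)data;
    *total += len;
}

/* Keep conn_fd open and call recv() on the same socket again and again.
 * Returns 0 when the peer ends the TCP stream (recv() returns 0) and
 * -1 on an error such as ECONNRESET (errno is left set). */
int ws_read_loop(int conn_fd, size_t *total)
{
    unsigned char buf[4096];
    *total = 0;
    for (;;) {
        ssize_t n = recv(conn_fd, buf, sizeof buf, 0);
        if (n > 0) {
            on_bytes(buf, (size_t)n, total);
            continue;   /* no close(): the next recv() uses the same struct sock */
        }
        if (n == 0)
            return 0;   /* FIN received: orderly end of stream */
        if (errno == EINTR)
            continue;   /* interrupted by a signal handler: retry */
        return -1;
    }
}
```

The caller still owns conn_fd and closes it once ws_read_loop returns.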

The kernel has no awareness of the loop. Every iteration of recvfrom() goes through the exact same five-phase flow documented above:

tcp_recvmsg → sk_wait_data → schedule() → tcp_v4_rcv wakes via sock_def_readable → autoremove_wake_function → try_to_wake_up → finish_wait → skb_copy_datagram_msg

The only structural difference is that close() is never called between frames, so:

- The conn_fd and its struct sock remain allocated.

- The TCP connection (4-tuple) stays established.

- When the next frame arrives, __inet_lookup_skb finds the same struct sock again, and sock_def_readable wakes the same sleeping process again.

1.3.Summary Table

| Aspect | HTTP (short-lived) | WebSocket (long-lived) |
|---|---|---|
| Socket type | AF_INET, SOCK_STREAM | AF_INET, SOCK_STREAM |
| Kernel receive path | tcp_v4_rcv → tcp_queue_rcv → sock_def_readable | Identical |
| Sleep mechanism | sk_wait_data → schedule() | Identical |
| Wake mechanism | autoremove_wake_function → try_to_wake_up | Identical |
| After recvfrom() returns | close(conn_fd); socket destroyed | Loop back to recvfrom(); socket reused |
| struct sock lifetime | One request | Duration of connection |
| TCP connection teardown | Immediately after response | Only on close() or FIN/RST |
| Kernel "awareness" of protocol | None; just TCP bytes | None; just TCP bytes |

The kernel does not know or care whether the bytes that flow through a SOCK_STREAM socket represent HTTP/1.1, WebSocket frames, gRPC, or raw binary. Every layer from tcp_v4_rcv down to copy_to_user is shared. The protocol interpretation is exclusively a user-space concern.

2.Terminate a WebSocket Connection

A WebSocket connection can end in three ways, at decreasing levels of protocol cleanliness.

2.1.Clean close — WS CLOSE handshake (RFC 6455 §5.5.1)

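Either peer sends a CLOSE frame, and the receiver replies with its own CLOSE frame (usually echoing the status code). The TCP connection is then torn down with a normal FIN exchange. RFC 6455 §7.1.1 recommends that the server close the TCP connection first, so that the server, not the client, holds the TIME_WAIT state.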

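The CLOSE frame follows the standard RFC 6455 two-byte frame header. The first byte is always 0x88 (FIN=1, RSV=000, opcode=0x8). The second byte holds the MASK bit followed by a 7-bit payload length. MASK is set on client-to-server frames and clear on server-to-client frames. Control frames carry at most 125 payload bytes, so no extended length field is ever needed. The payload, if present, starts with a 2-byte status code in network byte order, optionally followed by a UTF-8 reason string.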
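A sketch under those rules: the C function below encodes the unmasked CLOSE frame a server would send. ws_build_close is a hypothetical name. A client would additionally set the MASK bit and append a 4-byte masking key, which this sketch omits:

```c
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Build an unmasked (server-to-client) CLOSE frame.
 * Layout: 0x88 | MASK=0 + 7-bit length | 2-byte status, big-endian | reason.
 * Control-frame payloads are capped at 125 bytes, so reason_len <= 123.
 * Returns the frame length, or 0 if the reason is too long or out is too small. */
size_t ws_build_close(uint8_t *out, size_t out_cap, uint16_t code,
                      const char *reason, size_t reason_len)
{
    size_t payload_len = 2 + reason_len;
    if (payload_len > 125 || out_cap < 2 + payload_len)
        return 0;
    out[0] = 0x88;                  /* FIN=1, RSV=000, opcode=0x8 (CLOSE) */
    out[1] = (uint8_t)payload_len;  /* MASK bit clear, 7-bit payload length */
    out[2] = (uint8_t)(code >> 8);  /* status code in network byte order */
    out[3] = (uint8_t)(code & 0xFF);
    if (reason_len > 0)
        memcpy(out + 4, reason, reason_len);
    return 2 + payload_len;
}
```

For status 1000 with no reason, the frame is the four bytes 0x88 0x02 0x03 0xE8.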

Common status codes:

| Code | Name | Meaning |
|---|---|---|
| 1000 | Normal Closure | Clean shutdown, all transfers complete |
| 1001 | Going Away | Server shutting down or browser navigating away |
| 1002 | Protocol Error | Protocol-level violation detected |
| 1003 | Unsupported Data | Received data type cannot be handled |
| 1011 | Internal Server Error | Unexpected server-side condition |
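On the receiving side, the status code has to be extracted from the payload. If the frame came from a client, it must be unmasked first. The sketch below assumes the whole frame is already in memory. ws_close_status is a hypothetical helper, and it maps an empty CLOSE payload to 1005 ("No Status Rcvd"), the code RFC 6455 reserves for that case:

```c
#include <stddef.h>
#include <stdint.h>

/* Extract the status code from a complete CLOSE frame (payload <= 125 bytes).
 * Masked client-to-server frames are unmasked with the 4-byte key.
 * Returns 1 and sets *code on success. Returns 0 if the frame is not a CLOSE
 * frame, is truncated, or has a 1-byte payload (a protocol error). */
int ws_close_status(const uint8_t *frame, size_t len, uint16_t *code)
{
    if (len < 2 || (frame[0] & 0x0F) != 0x8)
        return 0;
    int masked = (frame[1] & 0x80) != 0;
    size_t payload_len = frame[1] & 0x7F;
    if (payload_len > 125)          /* 126/127 = extended length: invalid here */
        return 0;
    size_t hdr = 2 + (masked ? 4 : 0);
    if (len < hdr + payload_len)
        return 0;
    if (payload_len == 0) {         /* CLOSE without a body */
        *code = 1005;
        return 1;
    }
    if (payload_len < 2)
        return 0;
    const uint8_t *key = frame + 2; /* only meaningful when masked */
    const uint8_t *p = frame + hdr;
    uint8_t hi = masked ? (uint8_t)(p[0] ^ key[0]) : p[0];
    uint8_t lo = masked ? (uint8_t)(p[1] ^ key[1]) : p[1];
    *code = (uint16_t)((hi << 8) | lo);
    return 1;
}
```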

After the CLOSE echo, the server calls close(conn_fd) → tcp_close() → inet_unhash(), removing the socket from tcp_hashinfo.ehash. The TCP_CLOSE path described above then completes the teardown.
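A sketch of that server-side sequence in C. It assumes a blocking socket and that each recv() starts at a frame boundary; a real implementation would buffer and parse frames properly. ws_server_close is a hypothetical name:

```c
#include <stdint.h>
#include <sys/socket.h>
#include <sys/types.h>
#include <unistd.h>

/* Server-initiated closing handshake, simplified:
 * 1. send an unmasked CLOSE frame carrying `code`,
 * 2. read until the peer's CLOSE reply (or EOF/error) arrives,
 * 3. close(conn_fd): tcp_close() sends the FIN, so the server side
 *    is the one that ends up in TIME_WAIT.
 * Returns 0 if the CLOSE frame was sent, -1 otherwise. */
int ws_server_close(int conn_fd, uint16_t code)
{
    const uint8_t frame[4] = {0x88, 0x02, (uint8_t)(code >> 8),
                              (uint8_t)(code & 0xFF)};
    int sent_ok = send(conn_fd, frame, sizeof frame, 0) == (ssize_t)sizeof frame;

    uint8_t buf[256];
    while (sent_ok) {
        ssize_t n = recv(conn_fd, buf, sizeof buf, 0);
        if (n <= 0)
            break;                  /* EOF or error: stop waiting */
        if ((buf[0] & 0x0F) == 0x8)
            break;                  /* opcode 0x8: the peer's CLOSE reply */
        /* any other frame arriving during closing is discarded */
    }
    close(conn_fd);
    return sent_ok ? 0 : -1;
}
```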

2.2.TCP FIN — half-close without WS CLOSE

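If the remote peer calls close(fd) without first sending a WS CLOSE frame, the peer's kernel sends a TCP FIN. Once any data queued ahead of the FIN has been read, the local recvfrom() returns 0 (EOF). The WebSocket library treats this as an abnormal close and surfaces it as an error event. RFC 6455 assigns this case status code 1006 (Abnormal Closure), which is only reported locally and never sent on the wire.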

2.3.TCP RST — abrupt termination

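If the peer's TCP stack aborts the connection, a TCP RST is delivered. This happens, for example, when the peer process exits while unread data is still in its receive buffer, or when a middlebox forcibly drops the connection. recvfrom() returns -1 with errno = ECONNRESET. No CLOSE frame exchange is possible, and any in-flight data is lost. A peer process that crashes with an empty receive buffer sends an ordinary FIN instead. A machine that loses power sends nothing at all, so the local side only notices through TCP keepalive or retransmission timeouts, and recvfrom() then fails with ETIMEDOUT.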

| Termination mode | How recvfrom() signals it | CLOSE frame exchanged? |
|---|---|---|
| WS CLOSE handshake | Returns the CLOSE frame bytes, then 0 on next call | Yes |
| TCP FIN (no WS CLOSE) | Returns 0 (EOF) | No |
| TCP RST | Returns -1, errno = ECONNRESET | No |
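The kernel side of this table fits in a few lines of C. In this sketch, the enum ws_end and classify_recv are hypothetical names. The function maps recv()/recvfrom()'s return value and errno to an outcome. ETIMEDOUT is included for the timeout case, which the table does not list. A CLOSE frame is just ordinary data to the kernel and can only be recognised by parsing the bytes:

```c
#include <errno.h>
#include <sys/types.h>

/* Outcomes of a single recv()/recvfrom() call on a WebSocket's TCP socket. */
enum ws_end { WS_DATA, WS_EOF, WS_RESET, WS_TIMEOUT, WS_OTHER_ERROR };

/* n is recv()'s return value; err is errno captured right after the call. */
enum ws_end classify_recv(ssize_t n, int err)
{
    if (n > 0)
        return WS_DATA;        /* ordinary bytes; may contain a CLOSE frame */
    if (n == 0)
        return WS_EOF;         /* peer's FIN: stream ended, with or without CLOSE */
    if (err == ECONNRESET)
        return WS_RESET;       /* RST from the peer or a middlebox */
    if (err == ETIMEDOUT)
        return WS_TIMEOUT;     /* keepalive or retransmission timeout */
    return WS_OTHER_ERROR;
}
```

Capture errno immediately after the call, because any later library call may overwrite it.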

3.The Browser Is Just Another C Program

A common misconception is that browsers have special OS-level networking primitives for WebSocket. They do not. A browser is built from ordinary OS processes. The JavaScript APIs new WebSocket(...), fetch(...), and ws.send(...) are high-level wrappers that end up in the same socket(), connect(), send(), recv() system calls that any C program uses. In Chrome, those calls are made by the network service process on the tab's behalf, not by the sandboxed renderer process running the page.

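Inside Chrome, new WebSocket("wss://example.com/chat") ultimately resolves to the same sequence: socket() and connect() to example.com on port 443, a TLS handshake, and then an HTTP Upgrade request sent over that connection.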

The ws:// / wss:// scheme string is consumed entirely by the browser before any network activity occurs. It never travels over the wire. Its sole purpose is to tell the browser:

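- Which internal engine to use: the WebSocket stack (not the HTTP fetch stack)

- Whether to add TLS: wss:// → perform the TLS handshake (SSL_connect) first; ws:// → skip TLS

- Which default port to use when the URL names none: 80 for ws://, 443 for wss://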

The server never sees the scheme. It receives a plain TCP connection with some bytes that happen to start with an HTTP Upgrade request.
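To make this concrete, here is a C sketch of the bytes a client sends right after connect() (and, for wss://, after the TLS handshake). ws_format_upgrade is a hypothetical helper. The header set follows the RFC 6455 opening handshake. The key in the test is the RFC's example nonce, not a value a real client should reuse:

```c
#include <stdio.h>
#include <string.h>

/* Format the opening handshake a WebSocket client sends. The scheme appears
 * nowhere: only the path and host travel in the request.
 * `key` must be the base64 encoding of a random 16-byte nonce.
 * Returns the request length, or -1 on bad input or a too-small buffer. */
int ws_format_upgrade(char *out, size_t cap, const char *host,
                      const char *path, const char *key)
{
    /* Refuse CR/LF in the inputs so they cannot inject extra header lines. */
    if (strpbrk(host, "\r\n") || strpbrk(path, "\r\n") || strpbrk(key, "\r\n"))
        return -1;
    int n = snprintf(out, cap,
                     "GET %s HTTP/1.1\r\n"
                     "Host: %s\r\n"
                     "Upgrade: websocket\r\n"
                     "Connection: Upgrade\r\n"
                     "Sec-WebSocket-Key: %s\r\n"
                     "Sec-WebSocket-Version: 13\r\n"
                     "\r\n",
                     path, host, key);
    return (n < 0 || (size_t)n >= cap) ? -1 : n;
}
```

A client would pass the resulting buffer to send() on the connected socket. Nothing in it mentions ws:// or wss://.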

4.The ws:// Scheme Never Reaches the Server

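This is why a backend WebSocket server has no concept of ws:// or wss://. It simply accepts a TCP connection, reads the first bytes, and checks whether they form an HTTP Upgrade request. If they do, it replies and keeps using the same socket for WebSocket frames.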
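A simplified server-side check in C. It assumes the request headers have already been read into a NUL-terminated buffer. looks_like_ws_upgrade and prefix_ci are hypothetical helpers. A real server would also validate Connection, Sec-WebSocket-Key, and Sec-WebSocket-Version before answering with 101 Switching Protocols:

```c
#include <ctype.h>
#include <stddef.h>
#include <string.h>

/* Case-insensitive prefix test (HTTP header names are case-insensitive). */
int prefix_ci(const char *s, const char *prefix)
{
    for (; *prefix; s++, prefix++)
        if (tolower((unsigned char)*s) != tolower((unsigned char)*prefix))
            return 0;   /* also stops safely when s is shorter than prefix */
    return 1;
}

/* Simplified check: a GET request line plus an "Upgrade: websocket" header.
 * Note there is no scheme to inspect; the server only ever sees these bytes. */
int looks_like_ws_upgrade(const char *req)
{
    if (strncmp(req, "GET ", 4) != 0)
        return 0;
    const char *line = strstr(req, "\r\n");   /* end of the request line */
    while (line != NULL) {
        line += 2;                            /* start of the next header */
        if (line[0] == '\r' && line[1] == '\n')
            break;                            /* blank line: end of headers */
        if (prefix_ci(line, "upgrade:")) {
            const char *v = line + 8;
            while (*v == ' ' || *v == '\t')
                v++;                          /* skip optional whitespace */
            return prefix_ci(v, "websocket");
        }
        line = strstr(line, "\r\n");
    }
    return 0;
}
```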

For wss://, the TLS layer sits in front of this — either handled by the server library or terminated at a reverse proxy (nginx, Caddy) — but after TLS decryption the server sees the same plaintext HTTP Upgrade bytes.

5.JavaScript ↔ Kernel Correspondence

| JavaScript / Browser API | Chromium C++ internal | Kernel system call |
|---|---|---|
| new WebSocket("ws://...") | socket() + connect(), then send()/recv() of the HTTP Upgrade handshake | sys_socket + sys_connect + sys_sendto/sys_recvfrom |
| new WebSocket("wss://...") | socket() + connect() + SSL_connect(), then the Upgrade handshake over TLS | sys_socket + sys_connect + sys_sendto/sys_recvfrom for the TLS handshake |
| ws.send(data) | send() / SSL_write() | sys_sendto → tcp_sendmsg |
| ws.onmessage callback fires | recv() loop in network thread | sys_recvfrom → tcp_recvmsg → copy_to_user |
| ws.close() | send a CLOSE frame, then close(fd) once the handshake completes | sys_sendto → tcp_sendmsg, then sys_close → tcp_close → inet_unhash() |
| fetch("http://...") | socket() + connect() + send() + recv() | Same system calls as above |

At the OS boundary, a Chrome tab doing fetch() and a C program doing connect() + send() + recv() are indistinguishable. The kernel sees the same system calls either way.