1.Overview

When a network packet arrives at a machine, the Linux kernel does not process it all in one shot. Instead it splits the work across two distinct phases:

- A hard interrupt that notifies the CPU as quickly as possible, and

- A deferred soft interrupt that does the heavier lifting without blocking the CPU from other tasks.

Understanding these two phases and the subsystems that wire them together is the foundation for understanding how Linux networking works at a kernel level.

2.Hard Interrupts and Soft Interrupts

2.1.NIC — Network Interface Card

A NIC (Network Interface Card) is the hardware component that connects a machine to a network.

- It has a MAC address that identifies it on the local network.

- It receives raw electrical or optical signals and converts them into bytes.

- It writes those bytes directly into kernel memory via DMA (Direct Memory Access).

The CPU takes no part in the DMA transfer itself. Once a frame has been fully received, the NIC raises a hardware interrupt to notify the CPU.

In modern servers the NIC is often a PCIe add-in card or an on-board controller. The igb driver discussed later in this article targets the Intel 82575/82576 family of Gigabit Ethernet NICs.

2.2.ISR — Interrupt Service Routine

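When a NIC receives a packet, it raises a hard interrupt. With message-signalled interrupts the sequence is:

- The NIC issues a PCIe memory write, for example "write value 33 to address 0xFEE00000". Addresses in the 0xFEE00000 range are reserved for delivering interrupt messages to a CPU's local APIC, and the value carries the vector number (33 here).

- The local APIC delivers vector 33 to the CPU, which stops what it is doing.

- The CPU uses the vector as an index into its interrupt descriptor table to find the registered handler, the ISR.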

For the igb driver, the ISR registered for each queue's hard interrupt is the function igb_msix_ring.

It must run in the shortest time possible because while the ISR executes, all other interrupts on that CPU core are blocked. Its only jobs are:

- Acknowledge the interrupt to the NIC hardware so it stops asserting the line.

- Call napi_schedule to queue the heavier processing work.

- Return immediately, restoring the CPU to what it was doing.

All actual packet work — DMA mapping, sk_buff allocation, protocol dispatch — is deferred to a soft interrupt (softirq).

A softirq runs after the hard interrupt handler returns, in a context that user processes cannot preempt but hard interrupts can. This two-phase design lets the kernel acknowledge the hardware quickly and get out of the way, while the actual packet processing happens in the deferred phase.

2.3.The Socket Buffer — sk_buff (aka skb)

skb is shorthand for struct sk_buff, the central data structure that represents a network packet as it travels through the kernel networking stack. When the NAPI poll function drains the hardware ring buffer, it wraps each received page in an sk_buff so that every layer above — IP, TCP, UDP — can work with a uniform interface.

An sk_buff carries:

- Data pointers: head, data, tail, and end delimit the packet payload and the headroom/tailroom reserved for headers. Each layer peels or pushes headers by adjusting data and tail without copying bytes.

- Protocol metadata: offsets to the MAC, network, and transport headers, plus the protocol identifier.

- Device reference: a pointer to the net_device the packet arrived on.

- Checksum and timestamp fields: used by the checksum offload engine and packet timestamping subsystem.
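The pointer arithmetic behind the data pointers can be sketched in user space. The following is a simplified model, not kernel code: struct mini_skb and the mini_skb_* helpers are hypothetical names that imitate what the kernel's skb_reserve, skb_put, skb_push, and skb_pull do to head, data, tail, and end.

```c
#include <assert.h>
#include <stddef.h>
#include <stdlib.h>

/* Hypothetical userspace stand-in for the four data pointers of struct sk_buff.
 * The real structure carries many more fields. */
struct mini_skb {
    unsigned char *head; /* start of the allocated buffer */
    unsigned char *data; /* start of the current packet data */
    unsigned char *tail; /* end of the current packet data */
    unsigned char *end;  /* end of the allocated buffer */
};

int mini_skb_alloc(struct mini_skb *skb, size_t size)
{
    skb->head = malloc(size);
    if (!skb->head)
        return -1;
    skb->data = skb->tail = skb->head;
    skb->end = skb->head + size;
    return 0;
}

/* Reserve headroom on an empty buffer so headers can be pushed later. */
void mini_skb_reserve(struct mini_skb *skb, size_t len)
{
    assert(skb->data == skb->tail);
    assert((size_t)(skb->end - skb->tail) >= len);
    skb->data += len;
    skb->tail += len;
}

/* Append len bytes at the tail; returns where the caller should write them. */
unsigned char *mini_skb_put(struct mini_skb *skb, size_t len)
{
    unsigned char *old_tail = skb->tail;

    assert((size_t)(skb->end - skb->tail) >= len);
    skb->tail += len;
    return old_tail;
}

/* Prepend a header: move data backwards into the headroom. */
unsigned char *mini_skb_push(struct mini_skb *skb, size_t len)
{
    assert((size_t)(skb->data - skb->head) >= len);
    skb->data -= len;
    return skb->data;
}

/* Strip a header: move data forwards past it. No bytes are copied. */
unsigned char *mini_skb_pull(struct mini_skb *skb, size_t len)
{
    assert((size_t)(skb->tail - skb->data) >= len);
    skb->data += len;
    return skb->data;
}

size_t mini_skb_len(const struct mini_skb *skb)
{
    return (size_t)(skb->tail - skb->data);
}

size_t mini_skb_headroom(const struct mini_skb *skb)
{
    return (size_t)(skb->data - skb->head);
}
```

On the receive path a layer would call the pull helper to step past its own header (14 bytes for a plain Ethernet header) before handing the buffer to the next layer; on the transmit path each layer pushes its header into the reserved headroom instead.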

Placing skb allocation in the softirq phase, alongside DMA mapping, rather than in the hard interrupt phase is deliberate. Allocating memory (kmem_cache_alloc from the skbuff_head_cache slab) and filling in metadata takes non-trivial time. Doing it inside a hard interrupt would block all other interrupts on that CPU core. Deferring it to the softirq phase keeps the ISR minimal and the system responsive.

3.Initialising the Network Subsystem

3.1.net_dev_init

The network device subsystem is initialised via subsys_initcall(net_dev_init).

This macro registers net_dev_init to run during the subsys phase of kernel boot, before any device drivers load. Inside net_dev_init, two things happen that matter for packet reception.

First, a per-CPU struct softnet_data is initialised for every CPU; its poll_list is where NAPI later queues work.

Second, the softirq handlers for networking are registered with open_softirq.

This records net_rx_action and net_tx_action in softirq_vec at the NET_RX_SOFTIRQ and NET_TX_SOFTIRQ indices respectively.

From this point on, whenever a NIC raises a hard interrupt and its ISR, through napi_schedule, sets the NET_RX_SOFTIRQ bit, the __do_softirq path (or ksoftirqd under load) will eventually call net_rx_action.

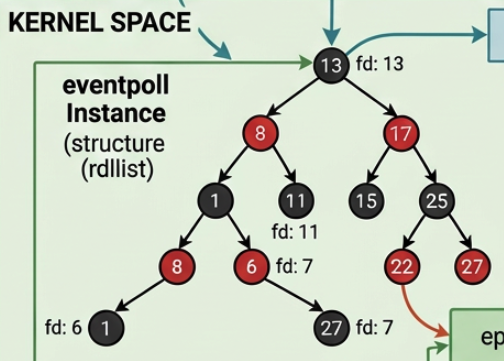

3.2.NAPI

NAPI (New API) is the interrupt-mitigation mechanism introduced in Linux 2.6 to handle high-throughput NICs without drowning the CPU in hardware interrupts.

The problem it solves: at 10 Gbps line rate with minimum-size frames, a NIC can receive close to 15 million packets per second, and without mitigation each one raises its own hard interrupt. Each interrupt preempts whatever the CPU was doing, saves and restores context, and runs the ISR. At such packet rates this overhead alone can consume an entire CPU, leaving no time for actual processing.
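The worst-case packet rate follows from Ethernet framing arithmetic. The sketch below assumes standard Ethernet, where each frame occupies an extra 20 bytes on the wire (7-byte preamble, 1-byte start-of-frame delimiter, 12-byte inter-frame gap); the function name is hypothetical.

```c
#include <assert.h>

/* Per-frame wire overhead on standard Ethernet:
 * 7-byte preamble + 1-byte start-of-frame delimiter + 12-byte inter-frame gap. */
#define WIRE_OVERHEAD_BYTES 20

/* Upper bound on frames per second for a given link speed and frame size.
 * frame_bytes counts the frame from destination MAC through FCS (64..1518
 * for untagged, non-jumbo Ethernet). */
double max_packets_per_second(double link_bps, unsigned frame_bytes)
{
    assert(frame_bytes >= 64);
    return link_bps / ((frame_bytes + WIRE_OVERHEAD_BYTES) * 8.0);
}
```

For a 10 Gbps link this gives about 14.88 million packets per second with 64-byte frames and about 0.81 million with 1518-byte frames, which is why the interrupt-per-packet model breaks down mainly for small packets.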

NAPI's solution is to switch a queue from interrupt-driven reception to polling for as long as packets keep arriving:

- The first packet on a queue triggers a normal hard interrupt.

- The ISR (igb_msix_ring) calls napi_schedule, which adds the queue's napi_struct to softnet_data.poll_list; further interrupts for that queue stay disabled while it is being polled.

- net_rx_action, running in softirq context (on interrupt exit, or in ksoftirqd under sustained load), then calls the driver's registered poll callback, which processes up to a budget number of packets per invocation without any further interrupts.

- If the poll callback empties the ring before using up its budget, it completes NAPI and interrupts are re-enabled. If the budget is exhausted first, the queue stays on the poll list and is polled again.

This converts a storm of interrupts into a single interrupt followed by a bounded polling loop, which drastically reduces interrupt overhead under load while still being responsive at low traffic rates.
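The interplay between the interrupt, the poll list, and the budget can be modelled in a few lines of user-space C. This is a sketch under simplifying assumptions (a single queue, no concurrency, a counter instead of a real ring); struct napi_model_queue and the napi_model_* functions are hypothetical names, not kernel APIs.

```c
#include <assert.h>
#include <stdbool.h>

/* Hypothetical single-queue model of NAPI state. */
struct napi_model_queue {
    int  ring_pending; /* packets waiting in the hardware ring */
    bool irq_enabled;  /* is the queue's interrupt unmasked? */
    bool scheduled;    /* is the queue on the poll list? */
    int  hard_irqs;    /* how many hard interrupts fired */
    int  processed;    /* how many packets the poll loop handled */
};

/* NIC side: a packet lands in the ring. An interrupt fires only if the
 * queue's interrupt is enabled; the ISR then masks it and schedules polling. */
void napi_model_packet_arrives(struct napi_model_queue *q)
{
    q->ring_pending++;
    if (q->irq_enabled) {
        q->hard_irqs++;
        q->irq_enabled = false; /* no more interrupts while polling */
        q->scheduled = true;    /* the napi_schedule step */
    }
}

/* Driver poll callback: handle at most budget packets, return work done.
 * Finishing under budget means the ring is drained, so polling stops and
 * the interrupt is re-enabled. */
int napi_model_poll(struct napi_model_queue *q, int budget)
{
    int work = 0;

    while (work < budget && q->ring_pending > 0) {
        q->ring_pending--;
        q->processed++;
        work++;
    }
    if (work < budget) {
        q->scheduled = false;
        q->irq_enabled = true;
    }
    return work;
}

/* Softirq side: keep polling while the queue is scheduled.
 * Returns the number of poll invocations. */
int napi_model_rx_action(struct napi_model_queue *q, int budget)
{
    int rounds = 0;

    assert(budget > 0);
    while (q->scheduled) {
        napi_model_poll(q, budget);
        rounds++;
    }
    return rounds;
}
```

With a budget of 64, a burst of 150 packets costs one hard interrupt and three poll invocations (64, 64, and 22 packets) in this model. The real net_rx_action also enforces an overall packet and time limit across all queues, which the sketch leaves out.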

Each NIC queue is represented by a napi_struct, which holds, among other fields, the poll callback and its weight.

The driver registers its poll function and a weight (typically 64) during igb_probe. When traffic arrives, NAPI orchestrates everything through this struct.

4.Soft Interrupt Types

All softirq types are enumerated in include/linux/interrupt.h.

The two entries relevant to networking are NET_TX_SOFTIRQ (outgoing packet processing) and NET_RX_SOFTIRQ (incoming packet processing).

Each value is simply an index into softirq_vec, an array of struct softirq_action that maps each index to its handler function. When a hard interrupt sets a bit in the pending mask, __do_softirq picks it up and calls the corresponding entry.
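The index-to-handler mapping can be illustrated with a small user-space model. The enum below follows the order used in recent kernels (treat the exact list as version-dependent); the model_* functions are hypothetical stand-ins for open_softirq, raise_softirq, and __do_softirq, and the handler signature is simplified to take no arguments.

```c
#include <assert.h>
#include <stdint.h>

/* Softirq indices as listed in recent versions of include/linux/interrupt.h. */
enum {
    HI_SOFTIRQ = 0,
    TIMER_SOFTIRQ,
    NET_TX_SOFTIRQ,
    NET_RX_SOFTIRQ,
    BLOCK_SOFTIRQ,
    IRQ_POLL_SOFTIRQ,
    TASKLET_SOFTIRQ,
    SCHED_SOFTIRQ,
    HRTIMER_SOFTIRQ,
    RCU_SOFTIRQ,
    NR_SOFTIRQS
};

/* Simplified: one handler pointer per softirq index. */
struct softirq_action {
    void (*action)(void);
};

struct softirq_action softirq_vec[NR_SOFTIRQS];
uint32_t pending_mask; /* one bit per index; per-CPU in the real kernel */

/* Record a handler in the vector (models open_softirq). */
void model_open_softirq(int nr, void (*action)(void))
{
    assert(nr >= 0 && nr < NR_SOFTIRQS);
    softirq_vec[nr].action = action;
}

/* Mark a softirq as pending (models what the hard-interrupt path does). */
void model_raise_softirq(int nr)
{
    assert(nr >= 0 && nr < NR_SOFTIRQS);
    pending_mask |= (uint32_t)1 << nr;
}

/* Run every pending handler in index order; returns how many ran. */
int model_do_softirq(void)
{
    uint32_t pending = pending_mask;
    int ran = 0;

    pending_mask = 0;
    for (int nr = 0; nr < NR_SOFTIRQS; nr++) {
        if ((pending & ((uint32_t)1 << nr)) && softirq_vec[nr].action) {
            softirq_vec[nr].action();
            ran++;
        }
    }
    return ran;
}

/* Counters standing in for the real networking handlers. */
int model_rx_calls, model_tx_calls;
void model_net_rx_action(void) { model_rx_calls++; }
void model_net_tx_action(void) { model_tx_calls++; }
```

Registering the two networking handlers and then raising NET_RX_SOFTIRQ causes only the receive handler to run on the next model_do_softirq call, after which the pending mask is clear again.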

5.Initialising the Protocol Stack — inet_init

Layer-3 and layer-4 protocol support is brought in by fs_initcall(inet_init).

fs_initcall runs slightly later than subsys_initcall, after filesystems are ready. inet_init lives in net/ipv4/af_inet.c and orchestrates several things:

- The AF_INET address family is registered with the socket layer via sock_register.

- Core protocol handlers are added to the IP layer's protocol table via inet_add_protocol. This is where tcp_protocol and udp_protocol are inserted.

- The per-protocol socket operations structs inet_stream_ops and inet_dgram_ops are wired up.

- /proc entries and sysctl knobs are registered.

5.1.udp_rcv and tcp_v4_rcv

Both functions are the entry point for IP packets that have been demultiplexed by the IP layer. When ip_local_deliver_finish pulls a packet off the IP queue, it looks up the transport protocol number in the protocol table and calls the matching .handler. For TCP that is tcp_v4_rcv; for UDP that is udp_rcv.
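The lookup-and-dispatch step can be sketched as a table of handlers indexed by IP protocol number. This is a user-space model under stated assumptions: all model_* names are hypothetical, the handler signature is simplified, and only the protocol numbers (6 for TCP, 17 for UDP) are taken from the IP standard.

```c
#include <assert.h>
#include <stddef.h>

#define MODEL_IPPROTO_TCP 6
#define MODEL_IPPROTO_UDP 17
#define MODEL_MAX_PROTOS  256

/* Simplified protocol entry: just the receive handler. */
struct model_net_protocol {
    int (*handler)(const unsigned char *payload, size_t len);
};

/* Table indexed by the protocol field of the IP header. */
const struct model_net_protocol *model_protos[MODEL_MAX_PROTOS];

/* Install a handler; fails if the slot is already taken. */
int model_add_protocol(const struct model_net_protocol *prot, unsigned char num)
{
    if (model_protos[num])
        return -1;
    model_protos[num] = prot;
    return 0;
}

/* Deliver a payload to the transport handler for its protocol number.
 * Returns -1 when no handler is registered. */
int model_local_deliver(unsigned char protocol,
                        const unsigned char *payload, size_t len)
{
    const struct model_net_protocol *prot = model_protos[protocol];

    if (!prot)
        return -1;
    return prot->handler(payload, len);
}

/* Counters standing in for tcp_v4_rcv and udp_rcv. */
int model_tcp_seen, model_udp_seen;

int model_tcp_rcv(const unsigned char *payload, size_t len)
{
    (void)payload; (void)len;
    model_tcp_seen++;
    return 0;
}

int model_udp_rcv(const unsigned char *payload, size_t len)
{
    (void)payload; (void)len;
    model_udp_seen++;
    return 0;
}

const struct model_net_protocol model_tcp_protocol = { .handler = model_tcp_rcv };
const struct model_net_protocol model_udp_protocol = { .handler = model_udp_rcv };
```

A direct array index keeps the per-packet cost of finding the transport handler constant, no matter how many protocols are registered.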

At this point the kernel has already verified the IP header and confirmed the packet is destined for this host. tcp_v4_rcv and udp_rcv then perform transport-layer processing:

- Checksum verification

- Socket lookup

- Queue insertion (into sk->sk_receive_queue for UDP, or into the TCP receive buffer machinery)

- Waking any recv() call blocked in user space

These functions execute in softirq context as part of net_rx_action, either on interrupt exit or in ksoftirqd.

6.The NIC Driver — igb_init_module and pci_register_driver

The Intel Gigabit Ethernet driver (igb) registers itself at module init time: module_init(igb_init_module) names the entry point, and igb_init_module calls pci_register_driver.

pci_register_driver does not immediately bind to any hardware. It tells the kernel's PCI subsystem that the igb driver exists, along with the igb_driver struct which carries:

- The id_table: a list of PCI vendor/device IDs this driver handles.

- The probe function pointer (igb_probe): called by the PCI core whenever a matching device is found.

When the PCI core enumerates the bus and finds a device whose ID matches the id_table, it calls igb_probe. At that point the driver interrogates the hardware, allocates resources, and registers a net_device.

The driver also registers a NAPI poll function here. This is the function that will be placed on poll_list and called from net_rx_action to drain received packets from hardware.
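The register-then-match flow can be illustrated with a user-space model. Everything named model_* is hypothetical, the structures are heavily reduced, and the vendor/device pair in the table is only an example entry (0x8086 is Intel's PCI vendor ID; consult the driver's own id table for the authoritative device list).

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

struct model_pci_id {
    uint16_t vendor;
    uint16_t device;
};

struct model_pci_dev {
    uint16_t vendor;
    uint16_t device;
    const char *bound_driver; /* NULL until a probe succeeds */
};

struct model_pci_driver {
    const char *name;
    const struct model_pci_id *id_table; /* terminated by a {0, 0} entry */
    int (*probe)(struct model_pci_dev *dev);
};

#define MODEL_MAX_DRIVERS 8
const struct model_pci_driver *model_drivers[MODEL_MAX_DRIVERS];
size_t model_nr_drivers;

/* Registration only records the driver; no hardware is touched yet. */
int model_pci_register_driver(const struct model_pci_driver *drv)
{
    if (model_nr_drivers == MODEL_MAX_DRIVERS)
        return -1;
    model_drivers[model_nr_drivers++] = drv;
    return 0;
}

/* Called when bus enumeration finds a device: match it against every
 * registered id_table and probe on the first hit. Returns 1 if bound. */
int model_pci_device_found(struct model_pci_dev *dev)
{
    for (size_t d = 0; d < model_nr_drivers; d++) {
        const struct model_pci_driver *drv = model_drivers[d];

        for (const struct model_pci_id *id = drv->id_table; id->vendor; id++) {
            if (id->vendor == dev->vendor && id->device == dev->device &&
                drv->probe(dev) == 0) {
                dev->bound_driver = drv->name;
                return 1;
            }
        }
    }
    return 0;
}

/* Stand-in for igb_probe: just counts invocations. */
int model_probe_calls;
int model_igb_probe(struct model_pci_dev *dev)
{
    (void)dev;
    model_probe_calls++;
    return 0;
}

const struct model_pci_id model_igb_ids[] = {
    { 0x8086, 0x10C9 }, /* example Intel entry, assumed for illustration */
    { 0, 0 }
};

const struct model_pci_driver model_igb_driver = {
    .name = "igb",
    .id_table = model_igb_ids,
    .probe = model_igb_probe,
};
```

Registering the driver leaves the probe counter at zero; the probe function runs only once a device with a matching ID is reported, which mirrors the separation between pci_register_driver and igb_probe described above.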

7.Activating the Network Card — Ring Buffer and Queues

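When an interface is brought up (e.g. ip link set eth0 up), the kernel calls through net_device_ops.ndo_open, which for igb is igb_open (a thin wrapper around __igb_open). It first sets up the Tx and Rx ring resources and then registers the interrupt handlers.

igb_setup_all_rx_resources sets up one Rx queue per CPU (or as many as configured). For each queue it calls igb_setup_rx_resources, which allocates that queue's ring buffer.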

7.1.Two Parallel Arrays: the Ring Buffer

Two parallel arrays are allocated for each queue:

- igb_rx_buffer[]: a kernel-side software array, one entry per descriptor slot. Each entry holds the struct page pointer and the DMA address of the memory page allocated for that slot.

- e1000_adv_rx_desc[]: the hardware descriptor ring itself, allocated in DMA-coherent memory shared with the NIC. Each entry is a small structure containing the physical (DMA) buffer address that the NIC writes packet data into.

These two arrays together form the ring buffer. The NIC walks the descriptor ring in hardware and writes packet data via DMA directly into the kernel pages that each e1000_adv_rx_desc entry points to, without involving the CPU in the copy.

When a descriptor is filled, the NIC raises a hard interrupt. The driver's ISR then hands control off to NAPI, which calls the registered poll function inside net_rx_action. The poll function walks the ring from the last-known head, reads each completed e1000_adv_rx_desc, retrieves the matching page from igb_rx_buffer, constructs an sk_buff, and passes it up through the network stack.

The reason for having two separate arrays is that the NIC only understands physical (DMA) addresses, not kernel virtual addresses or struct page pointers. The software-side igb_rx_buffer keeps all the bookkeeping that only the CPU needs, while the hardware-side e1000_adv_rx_desc is the minimal shared interface with the NIC.

7.2.When Data Arrive: Zero-Copy, Same Physical Memory, Two Views

A critical point is that no copy happens between the NIC's DMA write and the construction of the sk_buff. The DMA address stored in e1000_adv_rx_desc[i] and the struct page* stored in igb_rx_buffer[i] refer to the exact same physical RAM page, seen from two sides: the device's and the kernel's.

struct page is the kernel's bookkeeping descriptor for one physical memory page (typically 4 KB). It is a small struct that records ownership, reference count, and flags, and from which page_address() can derive the virtual address.

The 4 KB of packet data live directly in physical RAM; struct page is just the library card for that memory.

The full lifecycle of a single slot is:

- Setup: igb_setup_rx_resources creates the two arrays. Each slot is then filled (igb_alloc_rx_buffers in the upstream driver): a physical page is allocated, its struct page* is stored in igb_rx_buffer[i], and dma_map_page() maps it to a DMA address that is written into the buffer-address field of e1000_adv_rx_desc[i].

- Packet arrives: the NIC DMA-writes packet bytes directly into that physical page (at 0xABC000, say). The CPU is not involved; no copy occurs.

- Hard interrupt fires: igb_msix_ring calls napi_schedule and returns immediately.

- Soft interrupt (net_rx_action → igb_poll → igb_clean_rx_irq): reads e1000_adv_rx_desc[i] for packet length and status, looks up igb_rx_buffer[i] for the struct page* of the memory the NIC already wrote into, and builds an sk_buff that points into that page, still without copying the payload.

- Refill: a new page is allocated and its DMA address is written back into e1000_adv_rx_desc[i], making the slot ready for the next packet.
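The slot lifecycle above can be modelled in user space with two parallel arrays. This is a sketch under loud assumptions: all model_* names are hypothetical, malloc stands in for page allocation, the "DMA address" is simply the pointer value cast to an integer, and memcpy plays the role of the NIC's DMA write.

```c
#include <assert.h>
#include <stdint.h>
#include <stdlib.h>
#include <string.h>

#define RING_SIZE 4
#define BUF_SIZE  2048

/* Device-visible descriptor: only what the "NIC" needs. */
struct model_rx_desc {
    uint64_t addr;   /* "DMA address" of the buffer */
    uint16_t length; /* bytes written by the NIC */
    uint8_t  done;   /* set by the NIC when the slot holds a packet */
};

/* Software-only bookkeeping for the same slot. */
struct model_rx_buffer {
    unsigned char *page; /* stand-in for struct page * */
    uint64_t dma;
};

struct model_rx_ring {
    struct model_rx_desc   desc[RING_SIZE];
    struct model_rx_buffer buf[RING_SIZE];
    unsigned next_to_use;   /* where the NIC writes next */
    unsigned next_to_clean; /* where the driver reads next */
};

/* Give slot i a fresh buffer; the slot is untouched on failure. */
int model_fill_slot(struct model_rx_ring *r, unsigned i)
{
    unsigned char *page = malloc(BUF_SIZE);

    if (!page)
        return -1;
    r->buf[i].page = page;
    r->buf[i].dma = (uint64_t)(uintptr_t)page;
    r->desc[i].addr = r->buf[i].dma; /* both views name the same memory */
    r->desc[i].length = 0;
    r->desc[i].done = 0;
    return 0;
}

void model_ring_free(struct model_rx_ring *r)
{
    for (unsigned i = 0; i < RING_SIZE; i++) {
        free(r->buf[i].page);
        r->buf[i].page = NULL;
    }
}

int model_ring_setup(struct model_rx_ring *r)
{
    memset(r, 0, sizeof(*r));
    for (unsigned i = 0; i < RING_SIZE; i++) {
        if (model_fill_slot(r, i) != 0) {
            model_ring_free(r);
            return -1;
        }
    }
    return 0;
}

/* NIC side: write the frame through the descriptor's address. */
int model_nic_receive(struct model_rx_ring *r, const void *frame, uint16_t len)
{
    struct model_rx_desc *d = &r->desc[r->next_to_use];

    if (d->done || len > BUF_SIZE)
        return -1; /* ring full or frame too large: drop */
    memcpy((void *)(uintptr_t)d->addr, frame, len); /* models the DMA write */
    d->length = len;
    d->done = 1;
    r->next_to_use = (r->next_to_use + 1) % RING_SIZE;
    return 0;
}

/* Driver side: hand the filled buffer to the caller (who now owns it)
 * and refill the slot. Returns NULL if nothing is ready. */
unsigned char *model_clean_rx(struct model_rx_ring *r, uint16_t *len)
{
    unsigned i = r->next_to_clean;
    unsigned char *page;

    if (!r->desc[i].done)
        return NULL;
    page = r->buf[i].page;
    *len = r->desc[i].length;
    if (model_fill_slot(r, i) != 0)
        return NULL; /* slot left as it was; retry later */
    r->next_to_clean = (i + 1) % RING_SIZE;
    return page;
}
```

The pointer returned by model_clean_rx is the very buffer the simulated NIC wrote into, which is the model's equivalent of building the sk_buff on top of the DMA page. The upstream driver additionally recycles pages where it can, which this sketch does not attempt.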

8.Registering Interrupt Handlers — igb_request_irq

After the ring buffers are set up, __igb_open calls igb_request_irq to wire the hardware interrupts to their handlers.

Modern Intel NICs support MSI-X (Message Signalled Interrupts Extended), which lets each Rx/Tx queue raise its own interrupt vector, and each vector can be pinned to a specific CPU core. igb_request_msix registers one handler per vector; for the queue vectors that handler is igb_msix_ring.

9.References

- 張彥飛 (Zhang Yanfei), 深入理解 Linux 網絡 (Understanding Linux Networking in Depth), Broadview