1. Repository

2. What is poll()?

poll() is a system call for I/O multiplexing: it lets a program monitor multiple file descriptors at once and wait until one or more of them is ready for I/O. It is an alternative to select() that addresses some of select()'s limitations.

3. Why Use poll()?

Problem: A server needs to handle multiple clients, but with traditional blocking I/O, waiting for data from one client would freeze the entire server.

Solution: poll() lets us monitor many file descriptors at once, blocking until at least one becomes ready. The kernel handles the waiting efficiently using interrupts, not busy-wait loops.

4. The poll() Function Signature

Declared in <poll.h> as: int poll(struct pollfd *fds, nfds_t nfds, int timeout);

Parameters:

- fds: Array of pollfd structures describing the file descriptors to monitor
- nfds: Number of entries in the fds array
- timeout: How long to wait, in milliseconds:
  - -1: Block indefinitely until an event occurs
  - 0: Return immediately (polling mode)
  - Positive: Wait up to this many milliseconds

Return Value:

- Positive: Number of entries in fds whose revents is non-zero
- 0: The timeout expired with no events
- -1: An error occurred (check errno)

5. The pollfd Structure

Key fields:

- fd: The file descriptor to watch (socket, file, pipe, etc.)
- events: Bitmask of events we're interested in (set by us)
- revents: Bitmask of events that occurred (set by the kernel)

6. Event Flags (Bitmask Values)

Common flags we set in events (the hex values shown are those used on Linux; POSIX specifies only the names):

- POLLIN (0x0001): Data available to read
- POLLPRI (0x0002): Urgent data available
- POLLOUT (0x0004): Ready to write data

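Flags the kernel may set in revents (POLLERR, POLLHUP, and POLLNVAL are reported even if we did not request them in events):

- POLLIN: Data ready to read
- POLLOUT: Ready for writing
- POLLERR (0x0008): Error condition
- POLLHUP (0x0010): Hang up (the other end closed the connection)
- POLLNVAL (0x0020): Invalid request (fd is not open)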

7. Checking Events with Bitwise Operations

When poll() returns, we check revents using bitwise AND (&) to see which events occurred:

Why bitwise & instead of &&?

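Because revents is a bitmask in which several events can be set at once, we need to test individual bits. By contrast, revents && POLLIN is true whenever revents is non-zero at all, regardless of which bit is set:

- revents = 0x0009 means both POLLIN (0x0001) and POLLERR (0x0008) occurred
- revents & POLLIN checks whether bit 0 is set
- 0x0009 & 0x0001 = 0x0001 (non-zero, so true: data is ready despite the error)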

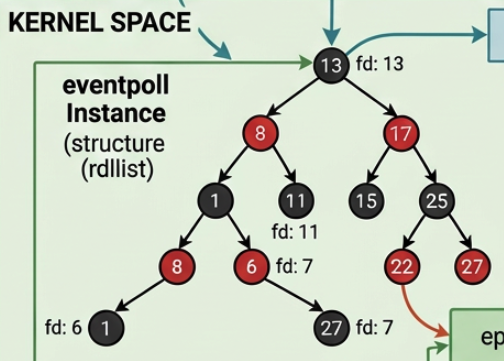

8. How poll() Works Internally

8.1. Not a Busy-Wait Loop

Despite its name, poll() does not continuously poll in a loop. Instead:

- Process goes to sleep: poll() places the process on the wait queue of each watched file descriptor and suspends it

- Kernel monitors hardware: e.g. the network card raises an interrupt when data arrives

- Interrupt wakes process: the kernel's interrupt handling marks the descriptor ready and wakes the process

- poll() returns: with the revents fields filled in

This is event-driven and efficient: the process uses essentially no CPU while it waits.

8.2. The Kernel's Wait Queue Mechanism

9. Complete Server Example Walkthrough

Here's how a TCP server uses poll() to handle multiple clients:

9.1. Set Up the Socket with Options

setsockopt() explanation:

- Purpose: Configure socket options

- SOL_SOCKET: Operate at the socket level (not protocol-specific)

- SO_REUSEADDR: Allow the port to be reused immediately after a restart

- opt = 1: Enable the option (0 would disable it)

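Without SO_REUSEADDR, restarting the server while connections from its previous run are still in the TIME_WAIT state makes bind() fail with "Address already in use" until that state expires (typically 30 to 120 seconds depending on the OS; 60 seconds on Linux).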

9.2. Initialize the pollfd Array

Why MAX_CLIENTS + 1?

- Index 0: The listening socket (accepts new connections)

- Index 1 to MAX_CLIENTS: Connected client sockets

9.3. Main Event Loop

Key points:

- We rebuild the fds array each iteration from the currently active clients

- poll(fds, nfds, -1) blocks indefinitely until events occur

- When it returns, n_events tells us how many fds have events

9.4. Handle New Connections

Process:

- Check whether the listening socket has a POLLIN event

- accept() the new connection (returns a new fd)

- Find a free slot in our client tracking array

- Store the connection, or reject it if the array is full

- Decrement the event counter

9.5. Handle Client Data

Process:

- Loop through the client sockets (index 1 onwards)

- Check whether each has a POLLIN event (data ready)

- read() the data from the socket

- If read() returns 0 (the client disconnected) or -1 (an error), close the socket, clean up the slot, and decrement nfds

10. poll() vs select() Comparison

| Feature | select() | poll() |
|---|---|---|
| Max FDs | fd values must be below FD_SETSIZE (typically 1024) | No fixed limit (bounded by the process's open-file limit) |
| API | Uses fd_set bitmasks | Uses a pollfd array |
| Modification | Modifies the fd_set in place (must rebuild before each call) | Separates events (input) from revents (output) |
| Performance | O(n) where n = highest fd + 1 | O(n) where n = number of entries passed |
| Clarity | Less intuitive (FD_SET, FD_ISSET macros) | More straightforward |

When to use poll():

- Need more than 1024 file descriptors

- Want cleaner, more maintainable code

- Don't need to modify the watched set frequently

When to use select():

- Maximum portability (older systems)

- Very few file descriptors

- Need sub-millisecond timeouts (select() takes a struct timeval with microsecond fields; poll() takes whole milliseconds)

11. Common Patterns and Best Practices

11.1. Always Check Return Value

11.2. Use Event Counter Optimization

Decrementing the n_events counter each time we handle a ready fd lets us exit the loop early once all reported events are processed, instead of checking every remaining fd.

11.3. Handle POLLHUP and POLLERR

11.4. Initialize Unused Slots

Setting fd = -1 tells poll() to ignore that array entry: any negative fd is skipped, and its revents is set to 0.

12. Memory and Performance Considerations

Advantages:

- No fixed FD limit like select()

- Only loops through the fds we actually registered

- Kernel efficiently uses interrupts, not busy-waiting

- Separates input (events) from output (revents)

Disadvantages:

- Still an O(n) scan through all fds on each call

- Not as efficient as epoll() (Linux) or kqueue() (BSD) for thousands of connections

- Must update the array whenever the set of watched fds changes

13. Modern Alternatives

For servers handling thousands of connections:

- Linux: epoll(), whose cost per wait scales with the number of ready fds rather than the number watched

- BSD/macOS: kqueue(), similar to epoll

- Windows: IOCP (I/O Completion Ports)

- Cross-platform: the libevent or libuv libraries

But poll() is perfect for learning multiplexing concepts and handles hundreds of connections efficiently.

14. Summary

- poll() monitors multiple file descriptors for I/O readiness

- Uses a pollfd array with fd, events, and revents fields

- Kernel puts the process to sleep and wakes it via interrupts (not busy-wait)

- Check events using bitwise AND: revents & POLLIN

- Addresses select()'s limitations with a cleaner API and no fixed fd limit

- Ideal for servers with dozens to hundreds of concurrent connections

- Event-driven design enables efficient concurrent I/O handling